Sociotechnical and Algorithmic Justice

The lack of fairness in AI applications has been repeatedly demonstrated in prior research. For instance, decision support systems for loan applications were found to disproportionately favor certain socio-demographic groups. As a consequence, people living in certain areas, people with a specific ethnic background, and women were less likely to obtain a loan from the bank. This can prevent whole neighborhoods from improving their standard of living and cause further economic and societal problems, thus reinforcing existing imbalances.

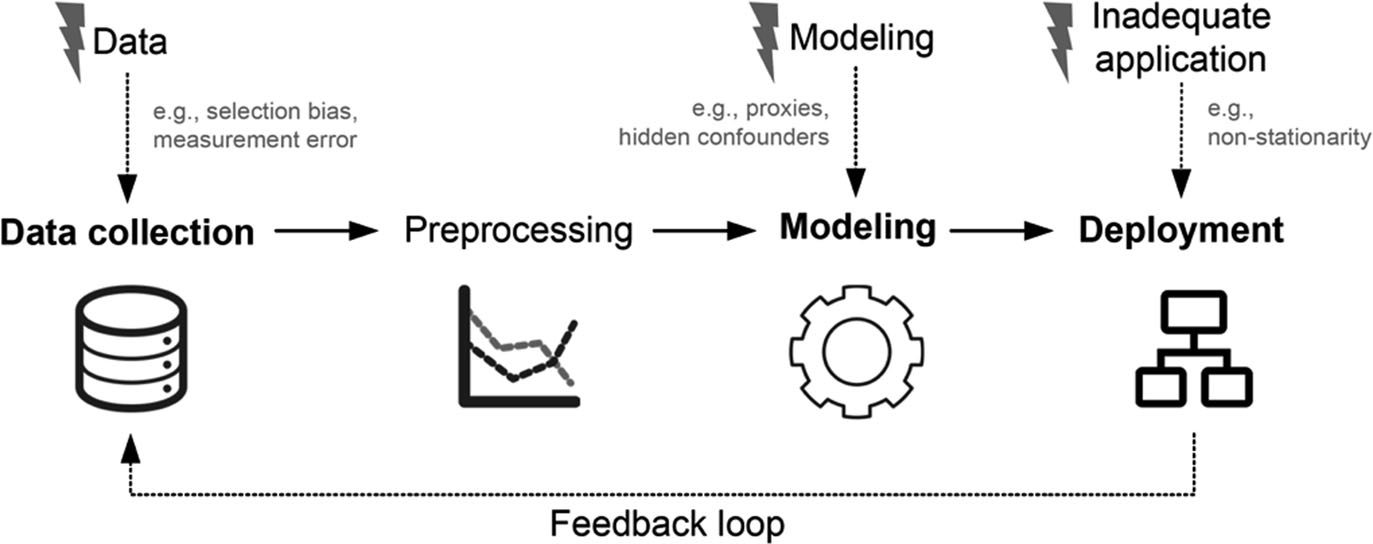

Discrimination can emerge not only from unbalanced data or the mathematical properties of particular algorithms, but also from inadequate or unclear data selection, implicit biases in the data, inappropriate application or interpretation of the outcomes, biases inherent to natural language processing or to the use of historical data, and insensitivity to proxies, that is, seemingly neutral variables that correlate with protected attributes.

Still, AI applications offer great advantages to businesses. They can improve the efficiency of various decision processes, take over repetitive or monotonous tasks, and offer management new insights. The range of applications is broad, and so is the range of risks.

We put forward a new perspective on justice as a sociotechnical phenomenon co-created by humans and machines. As members of the SNF-funded project "Governance and legal framework for managing artificial intelligence (AI)", we use this perspective to study notions of justice in lab experiments and in the field. We explore perceptions of justice at the individual and collective levels, aiming to understand and conceptualize the processes that lead to those perceptions. These insights will inform the future development of socio-algorithmic decision processes to prevent human bias as well as algorithmic discrimination.

Partners

LMU Munich, Institute of Artificial Intelligence (AI) in Management (Prof. Dr. Stefan Feuerriegel)

University of St. Gallen, Chair of Controlling / Performance Management (Prof. Dr. Klaus Möller, Prof. Dr. Maël Schnegg)

University of Zurich, Chair of Public Law and Digitalization, Health Law and Regulatory Sciences (Prof. Dr. Kerstin Noëlle Vokinger)