Knowing how difficult a software development task is while it is being performed can be helpful for many reasons. For instance, the estimate for completing a task might be revised, or the likelihood of a bug occurring in the source code might be predicted. Furthermore, a real-time task difficulty classification could be used to stop software developers when they experience difficulties and prevent them from introducing bugs into the code.

We investigated the use of psycho-physiological data, such as electroencephalographic (EEG) activity and electro-dermal activity (EDA), to determine task difficulty. In an exploratory study with 15 professional software developers, we recorded psycho-physiological data while the participants worked on six to eight code comprehension tasks of varying difficulty. After cleaning the recorded data, we extracted 18 features from the eye-tracking data, 31 features from the EEG data, and 7 features from the EDA data. These features were used in a machine learning approach to predict nominal task difficulty (easy/difficult).

We used this approach to make three types of predictions: by participant, by task, and by participant-task pair. The by-participant classifier would be the most useful in practice: trained on a small set of people, it could be applied to any new person doing new tasks and still accurately assess task difficulty. Next in utility is the by-task classifier: trained on people doing a set of tasks, it would work well when applied to one of those people doing any new task. Finally, the by-participant-task-pair classifier, trained on a set of people doing programming tasks, predicts the difficulty of a task as perceived by one of those people when the rest have already done that task.

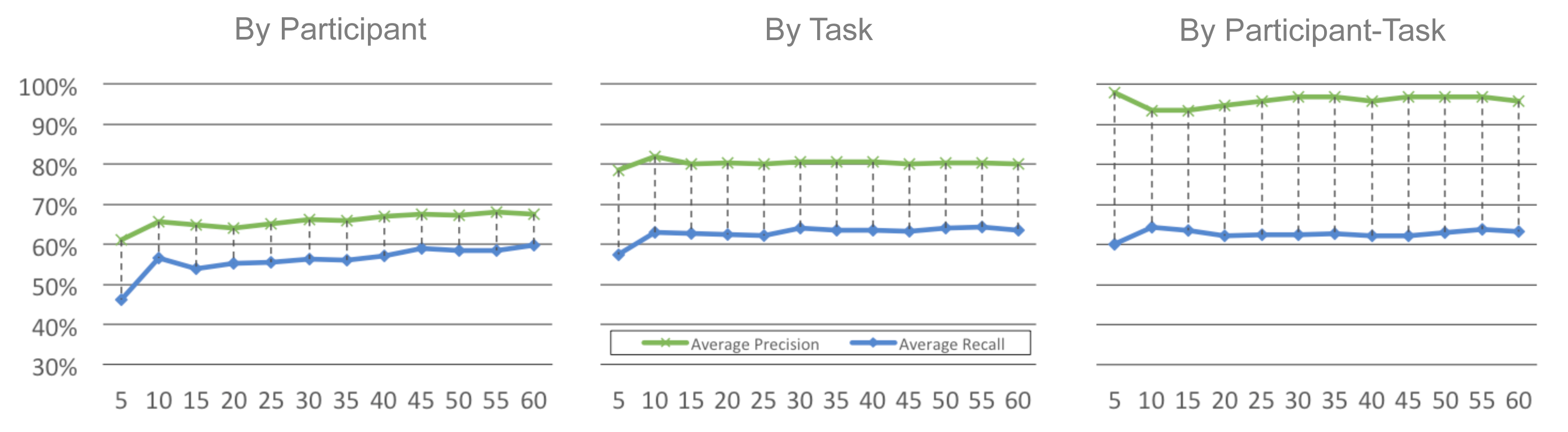
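
One way to read the three prediction types is as three hold-out schemes: leave one participant out, leave one task out, or leave one participant-task pair out. The sketch below illustrates that reading only; it is not the study's evaluation code. The record layout, the helper names, and the toy data are all assumptions made for illustration.

```python
from typing import Callable, Hashable, Iterator, List, Tuple

# Hypothetical record layout: (participant_id, task_id, feature_vector, label).
Sample = Tuple[str, str, List[float], str]


def leave_one_group_out(
    samples: List[Sample],
    group_key: Callable[[Sample], Hashable],
) -> Iterator[Tuple[Hashable, List[Sample], List[Sample]]]:
    """Yield (held_out_group, train, test), holding out one group at a time."""
    groups = sorted({group_key(s) for s in samples})
    for held_out in groups:
        train = [s for s in samples if group_key(s) != held_out]
        test = [s for s in samples if group_key(s) == held_out]
        yield held_out, train, test


# Grouping keys for the three settings (names are illustrative).
def by_participant(sample: Sample) -> Hashable:
    return sample[0]


def by_task(sample: Sample) -> Hashable:
    return sample[1]


def by_pair(sample: Sample) -> Hashable:
    return (sample[0], sample[1])


# Made-up toy data: 3 participants x 2 tasks, one feature each.
SAMPLES: List[Sample] = [
    ("p1", "t1", [0.2], "easy"),
    ("p1", "t2", [0.9], "difficult"),
    ("p2", "t1", [0.3], "easy"),
    ("p2", "t2", [0.8], "difficult"),
    ("p3", "t1", [0.1], "easy"),
    ("p3", "t2", [0.7], "difficult"),
]

if __name__ == "__main__":
    for name, key in [("participant", by_participant),
                      ("task", by_task),
                      ("pair", by_pair)]:
        folds = list(leave_one_group_out(SAMPLES, key))
        print(f"by {name}: {len(folds)} folds")
```

In the by-participant setting, no data from the held-out person appears in the training set, which is why that setting is the hardest and the most relevant for a developer the classifier has never seen.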

The results show that we can predict task difficulty for a new developer with 64.99% precision and 64.85% recall, and for a new task with 84.34% precision and 69.79% recall. The results also show that we can improve the Naive Bayes classifier’s performance if we train it on just the eye-tracking data over the entire dataset.
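
For readers less familiar with the two metrics, the sketch below computes precision and recall for a binary easy/difficult labeling. It assumes "difficult" is the positive class; how the study aggregated the metrics across the two classes is not stated here, so the function and the labels are purely illustrative.

```python
from typing import List, Tuple


def precision_recall(
    actual: List[str],
    predicted: List[str],
    positive: str = "difficult",  # assumption: "difficult" is the positive class
) -> Tuple[float, float]:
    """Return (precision, recall) for the chosen positive class."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if p == positive and a == positive)
    fp = sum(1 for a, p in pairs if p == positive and a != positive)
    fn = sum(1 for a, p in pairs if p != positive and a == positive)
    # Fall back to 0.0 when a denominator is zero.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Made-up labels: 4 difficult tasks and 4 easy tasks.
ACTUAL = ["difficult"] * 4 + ["easy"] * 4
PREDICTED = ["difficult", "difficult", "easy", "easy",
             "difficult", "easy", "easy", "easy"]

if __name__ == "__main__":
    p, r = precision_recall(ACTUAL, PREDICTED)
    print(f"precision={p:.2f} recall={r:.2f}")
```

Here two of the three "difficult" predictions are correct (precision 2/3), and two of the four truly difficult tasks are found (recall 1/2).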

To create a classifier that is usable before a developer finishes a task and that adjusts to the developer's changing psycho-physiological conditions, we divided the collected data into sliding time windows. We chose window sizes from 5 seconds to 60 seconds, sliding 5 seconds between intervals. As shown in the accompanying figure, there appear to be no major differences in the performance of the classifier across the various time windows.
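
The windowing step can be sketched as follows. This is a minimal illustration, assuming windows must fit entirely inside the recording, that window sizes increase in 5-second steps, and that a per-window mean stands in for the real features; the function names and the sample signal are hypothetical.

```python
from typing import List, Optional, Tuple

# Assumption: window sizes of 5, 10, ..., 60 seconds.
WINDOW_SIZES_S = range(5, 65, 5)


def sliding_windows(
    duration_s: int, window_s: int, step_s: int = 5
) -> List[Tuple[int, int]]:
    """Return (start, end) pairs for windows that fit inside the recording."""
    windows = []
    start = 0
    while start + window_s <= duration_s:
        windows.append((start, start + window_s))
        start += step_s
    return windows


def window_means(
    samples: List[Tuple[float, float]],
    duration_s: int,
    window_s: int,
    step_s: int = 5,
) -> List[Optional[float]]:
    """Mean signal value per window; samples are (timestamp_s, value) pairs."""
    means: List[Optional[float]] = []
    for start, end in sliding_windows(duration_s, window_s, step_s):
        # Half-open interval [start, end) so no sample is counted at both edges.
        values = [v for t, v in samples if start <= t < end]
        means.append(sum(values) / len(values) if values else None)
    return means


if __name__ == "__main__":
    # Made-up signal: one reading per second for 30 seconds.
    signal = [(float(t), float(t)) for t in range(30)]
    print(sliding_windows(30, 10))
    print(window_means(signal, 30, 10))
```

Because each window yields its own feature vector, a prediction can be made as soon as the first window is complete rather than only after the task ends.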

Our results demonstrate that we can train a Naive Bayes classifier on short or long time windows with a variety of sensor data to predict whether a new participant will perceive his tasks to be difficult with a precision of over 70% and a recall of over 62%.
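
To make the classification step concrete, here is a minimal Naive Bayes sketch. The text names a Naive Bayes classifier but not its variant or implementation, so the Gaussian likelihoods, the class name, and the two-feature toy data below are assumptions for illustration only.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple


class GaussianNaiveBayes:
    """Naive Bayes with one Gaussian per feature and class (assumed variant)."""

    def fit(self, X: List[List[float]], y: List[str]) -> "GaussianNaiveBayes":
        by_class: Dict[str, List[List[float]]] = defaultdict(list)
        for row, label in zip(X, y):
            by_class[label].append(row)
        self.priors: Dict[str, float] = {}
        self.stats: Dict[str, List[Tuple[float, float]]] = {}
        for label, rows in by_class.items():
            self.priors[label] = len(rows) / len(X)
            stats = []
            for column in zip(*rows):  # iterate feature by feature
                mean = sum(column) / len(column)
                var = sum((v - mean) ** 2 for v in column) / len(column)
                stats.append((mean, var + 1e-9))  # small floor avoids division by zero
            self.stats[label] = stats
        return self

    def predict_one(self, row: List[float]) -> str:
        best_label, best_score = "", -math.inf
        for label, stats in self.stats.items():
            # Sum log prior and per-feature Gaussian log likelihoods.
            score = math.log(self.priors[label])
            for value, (mean, var) in zip(row, stats):
                score += -0.5 * math.log(2 * math.pi * var)
                score -= (value - mean) ** 2 / (2 * var)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Made-up feature vectors, one per time window (values are not from the study).
X_TRAIN = [[1.0, 2.0], [1.2, 1.8], [0.8, 2.2],
           [3.0, 4.0], [3.2, 3.8], [2.8, 4.2]]
Y_TRAIN = ["easy"] * 3 + ["difficult"] * 3

if __name__ == "__main__":
    model = GaussianNaiveBayes().fit(X_TRAIN, Y_TRAIN)
    print(model.predict_one([1.1, 2.0]))
    print(model.predict_one([3.1, 4.1]))
```

Each time window contributes one feature vector, so the same model can be trained on short or long windows without changing the code.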

The results of this exploratory study have been published at the 36th International Conference on Software Engineering, taking place in Hyderabad, India. The paper is available online.